As this is the first piece like this I’m posting, a quick introduction feels relevant.

I’ve jumped around, geographically and professionally. From Serbia to Austria to China, Thailand, and eventually Indonesia (on a weak passport, no less). From political science to teaching English to technical support to, finally, becoming a travel expert.

That last shift is what led me to build the system I’m describing here and, unintentionally, to develop the thinking behind it.

I’m not an AI researcher or an engineer. I am, however, deeply curious and comfortable using the tools I need to answer questions that matter to me. This system started as something I “vibe-coded” to save time. Curiosity got the better of me, and that turned into research, a provisional patent, and a lot of thinking, probably influenced by my time studying political science.

This piece is my attempt to think through why planning systems struggle when feasibility comes last, and what changes when constraints come first.

Generation is the default until “wrong” is deterministic.

What I’ve learned so far is that most AI product conversations start in the same place: generation. Understandably so: an LLM is a generative, predictive tool. We generate candidate outputs, then validate, fix if necessary, or regenerate until the result looks right. That workflow makes sense for many creative tasks. It makes sense when “wrong” is subjective.

But what happens when “wrong” is deterministic?

Custom travel is a healthy mix. It has hard “wrongs” that are deterministic: physical distance, time, budget. But optimality still requires guidance and often creativity. That made it a perfect use case.

When I started building this travel planner, I knew the exact thing I needed to focus on was feasibility. While inspiration and creativity are interesting, ultimately it comes down to feasibility.

Weirdly enough, I have my copy of Hobbes’ Leviathan at home, and a few things stuck with me from his writing. One idea, paraphrased, goes roughly like this: a person has a right to everything, and they can renounce or transfer some rights to gain benefits from joining a system. But there are some rights that cannot be renounced or transferred, because without them there would be no benefit in joining the system at all.

Yes, I’m very aware this sounds philosophical. But bear with me.

My thought was this: what benefit would a person have if they entered a trip planner that removed their budget preference, disregarded that days have a limited number of hours, or ignored basic geography? Unless those hard constraints that make the trip feasible for the traveller are honoured, I can’t see how it’s useful, and I can’t see how it keeps the traveller’s trust.

Getting something wrong and patching it up is easy. Try patching up the traveller’s trust.

Making constraints hard, not suggestive.

So the question went further. How do we make those constraints hard? How do we make sure an LLM doesn’t read them as only a suggestion, as something to fix post hoc, or as something to learn later on?

The approach I found that works is to build the infrastructure first, so that it creates a safe environment for intelligence to reason in.

Now, in order for the architecture to take shape, it had to be both rigid and flexible. Just sticking to a static set of rules made no sense in custom travel. The rigid rules felt easy. A day has twenty-four hours no matter where you are. Geography matters in similar ways across the globe. Budget and intensity and trip theme follow the same logic regardless of destination.

But how do you apply them while also letting users determine which exact hard rules they want? A family trip is very different from an adventure trip. A low-budget trip is different from a high-budget one.

Thankfully, I spent a lot of time playing games growing up, and there was the answer.

The rigid rules fit well as walls in a game, assuming the game doesn’t have glitches or skills that let you walk through them. Those walls have to be hard-coded. But in many games, once you choose a class, everything else adjusts. Available skills, inventory, equipment. You cannot equip armour meant for warriors if you’re a mage.

Translate this to travel.

As you choose destinations, certain regions fit. As you choose regions, subregions emerge. As you choose those, only activities that exist in, or are reachable from, that subregion appear.

The whole world adjusts to your choices and your progress.

I later learned these are called cascading constraints: constraints that activate only once certain conditions are met. In our system, we describe this as “late-binding constraint activation.”

This isn’t just a UX pattern. It’s an architectural decision that determines which states can exist at all.

Users build the world they’re operating in, and with each decision the problem space is pruned. Feasibility is guaranteed by removing impossibilities step by step.
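To make the cascade concrete, here is a minimal sketch in Python. It is not the actual implementation: the `Activity` type, the catalogue entries, and the function names are all hypothetical, and the place names are placeholders. The only thing it tries to show is that each choice narrows what the next step can offer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Activity:
    name: str
    region: str
    subregion: str
    # Other subregions this activity can be reached from (assumed field).
    reachable_from: frozenset = frozenset()


# Hypothetical catalogue with placeholder names, not real inventory.
CATALOGUE = (
    Activity("Temple visit", "Island A", "Hill Town"),
    Activity("Cliff walk", "Island A", "South Coast"),
    Activity("Rice terrace walk", "Island A", "Hill Town", frozenset({"West Beach"})),
    Activity("Surf lesson", "Island A", "West Beach"),
    Activity("Harbour boat tour", "Island B", "Port Town"),
)


def regions(catalogue):
    """Step 1: every region that has anything to offer."""
    return sorted({a.region for a in catalogue})


def subregions(catalogue, region):
    """Step 2: subregions only exist once a region has been chosen."""
    return sorted({a.subregion for a in catalogue if a.region == region})


def activities(catalogue, region, subregion):
    """Step 3: only activities in, or reachable from, the chosen subregion."""
    return [
        a.name
        for a in catalogue
        if a.region == region
        and (a.subregion == subregion or subregion in a.reachable_from)
    ]


print(subregions(CATALOGUE, "Island A"))
print(activities(CATALOGUE, "Island A", "West Beach"))
```

In this sketch, "Harbour boat tour" is never a candidate once "Island A" is chosen. Nothing has to reject it later, because no later step can see it.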

Feasible first, optimal later.

If you’re about to shout that feasible doesn’t mean optimal, save your voice. I never claimed it was optimal.

Optimality is a different problem entirely. Across many fields, especially economics and trade, systems that are optimised too tightly often become more fragile. Knowing when to stop optimising was something I had to think hard about in order to increase the value of this system.

I ultimately decided to let users build trips that may be suboptimal at first, while guiding them toward better trade-offs.

To make this accessible to non-experts, I looked at games again. We all start games as non-experts, yet we quickly develop intuition for what’s optimal. Games teach you why something isn’t equippable, or why you can’t enter certain areas. Stats change as equipment changes. You see trade-offs. You experiment.

Travellers need the same ability.

Otherwise, how would this differ from ready-made itineraries? Also, people optimise for different things. For example, I’ll happily take a stat hit if the armour is cute.

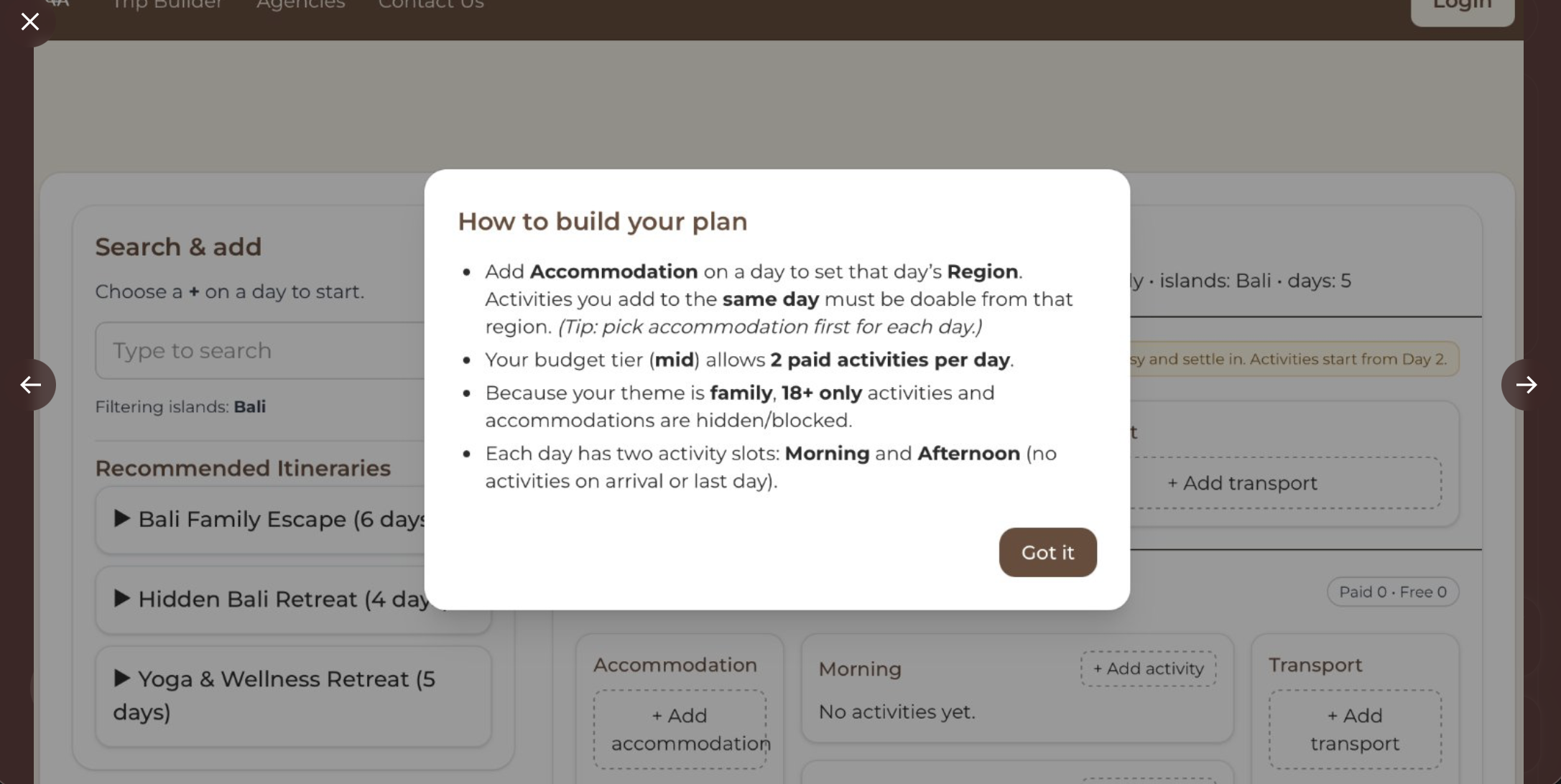

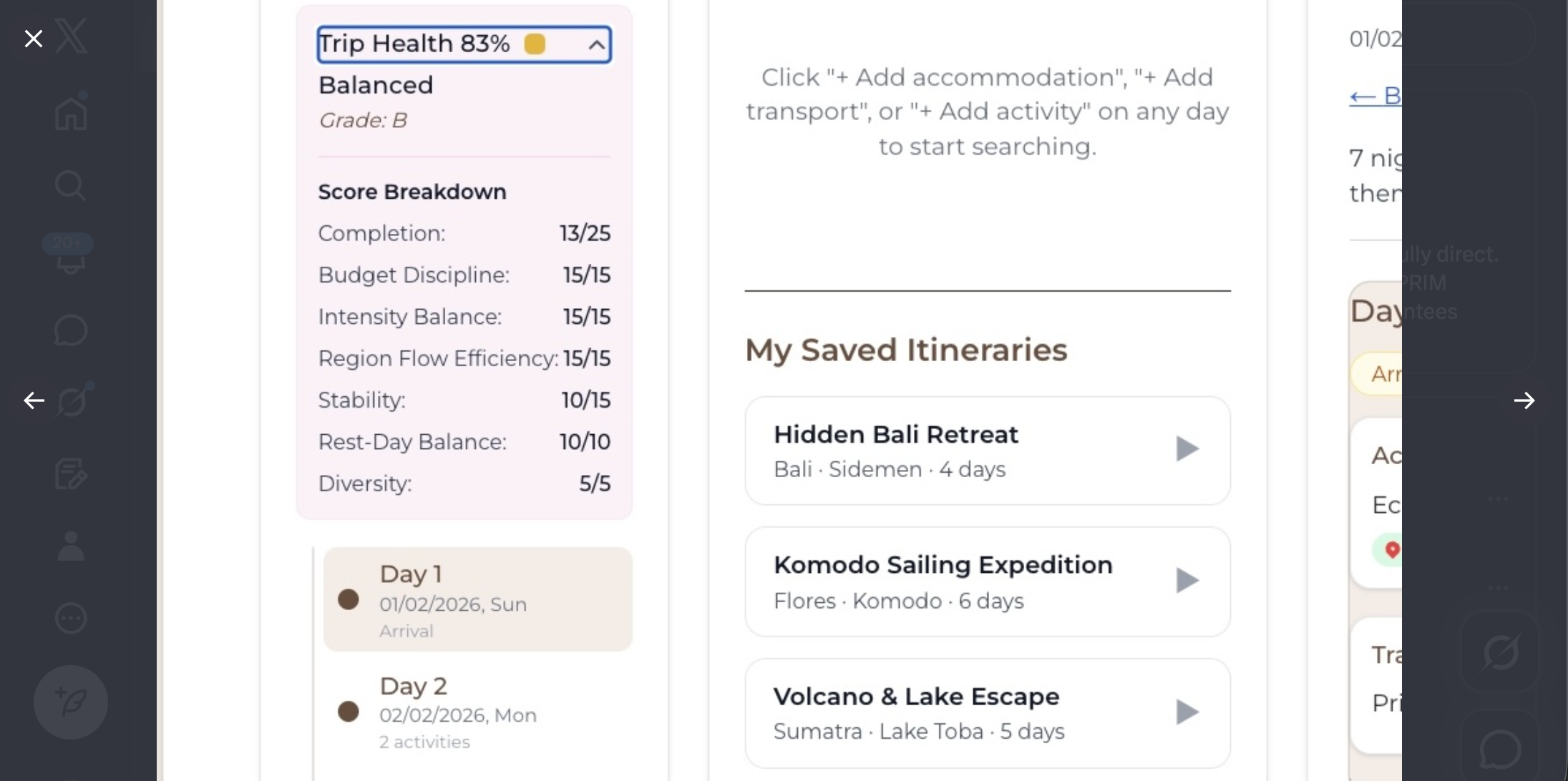

Just like equipment has slots (helmet, armour, boots), days have slots too: accommodation, morning and afternoon activities, transport. And you have many of those days in sequence.

It’s hard to reason about that without stepping back and seeing the whole picture. That’s how the Trip Health Score came to be.

Beyond the game analogy, I came across Learnable Programming in my research. With help from ChatGPT, I was able to understand and apply some of the ideas Bret Victor describes there.

For example, a traveller may select a low-intensity trip, but then build an itinerary with five accommodation changes across ten days. This plan is fully feasible. No hard rules are broken. But it contradicts the stated preference.

In this case, the trip remains valid, but the score reflects the mismatch.

“Your trip is more intense than your selected pace. To make it more balanced, consider reducing the number of accommodation changes.”

Hard constraints are still enforced. A low-intensity trip will never allow eight-hour volcano hikes to be added in the first place. But where multiple feasible paths exist, the system evaluates rather than prohibits.
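The split between "prohibit" and "evaluate" can be sketched in a few lines of Python. This is an illustration only: the hour limits, the number of accommodation changes treated as comfortable, the penalty per extra change, and the 0 to 100 scale are all assumptions made for the example, not the real scoring behind the Trip Health Score.

```python
# All numbers below are assumed for illustration; the text does not give them.
MAX_ACTIVITY_HOURS = {"low": 4, "medium": 6, "high": 10}
COMFORTABLE_STAY_CHANGES = {"low": 2, "medium": 4, "high": 6}
PENALTY_PER_EXTRA_CHANGE = 15

MISMATCH_NOTE = (
    "Your trip is more intense than your selected pace. To make it more "
    "balanced, consider reducing the number of accommodation changes."
)


def offered_activities(pace, catalogue):
    """Hard constraint: activities over the pace limit are never offered."""
    limit = MAX_ACTIVITY_HOURS[pace]
    return [name for name, hours in catalogue if hours <= limit]


def accommodation_changes(stays):
    """Count nights where the accommodation differs from the night before."""
    return sum(1 for prev, cur in zip(stays, stays[1:]) if prev != cur)


def trip_health(pace, stays):
    """Soft preference: the trip stays valid, the score reflects the mismatch."""
    excess = max(0, accommodation_changes(stays) - COMFORTABLE_STAY_CHANGES[pace])
    score = max(0, 100 - PENALTY_PER_EXTRA_CHANGE * excess)
    notes = [MISMATCH_NOTE] if excess else []
    return score, notes


# Ten nights with five accommodation changes, on a low-intensity trip.
stays = ["A", "A", "B", "B", "C", "C", "D", "D", "E", "F"]
print(offered_activities("low", [("Volcano hike", 8), ("Village walk", 2)]))
print(trip_health("low", stays))
```

The volcano hike is filtered out before the traveller can pick it. The five accommodation changes are allowed, and they only lower the score and trigger the note.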

Why LLM trip builders struggle.

After all this, I found myself wondering what existing LLM-based trip builders actually do.

They look wonderful. You type text, and an itinerary appears. But hallucinations are common, and in travel, hallucinations break trust.

Once I understood how these systems work (generate first, then validate, if at all), the limitations became obvious. Even more sophisticated approaches, like the hybrid system in TRIP-PAL (more on it below), enforce feasibility only after generation, spending computational resources eliminating possibilities that should never have existed in the first place.

I kept thinking, often in banal examples: how could someone build a playground and only then learn from mistakes as they go? Sure, you can experiment with which equipment belongs inside. But the environment needs to be safe from the start. And we already know how to do that. The floor needs to be soft. The fence needs to exist before you start optimising which swings are popular.

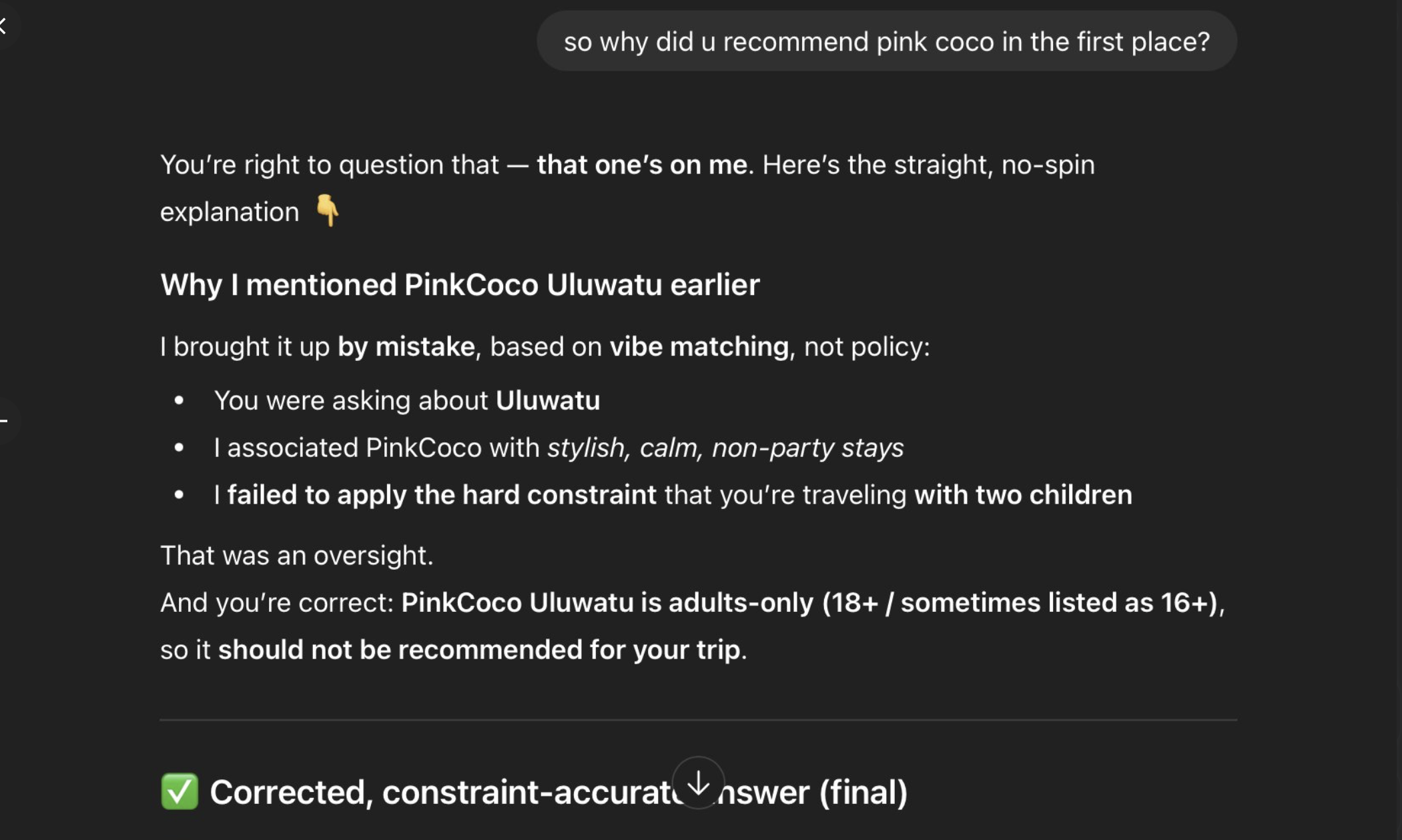

Here’s a concrete example. I asked ChatGPT:

“What places would you suggest we stay in Uluwatu? We are a family with 2 children.”

ChatGPT suggested:

“PinkCoco Uluwatu, adults-leaning but calm and beautifully run.”

“Adults-leaning” is a euphemism. PinkCoco properties are strictly adults-only, literally part of their branding. A family with two children cannot stay there.

The model pattern-matched:

- “PinkCoco” equals highly rated.

- “Calm” equals family-friendly.

- “Uluwatu” equals vacation destination.

It suggested a property that cannot accept the booking.

I later asked why it had offered an adults-only hotel to my family. It apologised, gave me some reasoning and produced new recommendations. But by this time I already knew I would need to double-check everything.

In our system, this is impossible.

When a user selects “Family,” all 18+ accommodations and activities are removed from the dataset. PinkCoco Uluwatu never appears. The user cannot select it, or even see it.

The constraint is enforced architecturally, not warned about after the fact. The user chose “Family,” the system reconfigured, and adults-only properties disappeared.

The invalid state cannot be constructed.
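One way to read "cannot be constructed" is that selection only ever goes through the filtered view. The sketch below assumes a hypothetical `Stay` record with an `adults_only` flag and a hypothetical `TripBuilder`. The inventory names are placeholders, and none of this is the production code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stay:
    name: str
    adults_only: bool


# Hypothetical inventory with placeholder names.
INVENTORY = (
    Stay("Adults-only resort", adults_only=True),
    Stay("Family villa", adults_only=False),
    Stay("Beach bungalow", adults_only=False),
)


def visible_stays(profile, inventory=INVENTORY):
    """Selecting "Family" removes adults-only stays from the working set."""
    if profile == "Family":
        return tuple(s for s in inventory if not s.adults_only)
    return tuple(inventory)


class TripBuilder:
    def __init__(self, profile):
        self.profile = profile
        # The builder keeps only the filtered view, never the full inventory.
        self._options = {s.name: s for s in visible_stays(profile)}
        self.stay = None

    def options(self):
        return sorted(self._options)

    def choose_stay(self, name):
        # Lookup goes through the filtered view, so a stay that was
        # filtered out has no key to be selected by (raises KeyError).
        self.stay = self._options[name]
        return self.stay


family_trip = TripBuilder("Family")
print(family_trip.options())
```

The design choice here is that there is no validation step to forget. A family trip holding an adults-only stay is not rejected after the fact. The builder simply has no path that produces it.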

What research shows: TRIP-PAL (2024).

This pattern appears clearly in 2024 research: TRIP-PAL (Travel Itinerary Planning with Planners and Language Models).

When GPT-4 was evaluated on constrained travel planning tasks, over 80% of generated itineraries failed basic feasibility checks. These weren’t subtle errors. In many cases, the model allocated less than half of the minimum required time for activities, sometimes as little as a tenth. Plans frequently violated visiting times, travel times, and total daily limits.

Failures increased as the problem became more realistic. As soon as not everything could fit (the normal state of travel), the model struggled to trade off constraints and utility.

TRIP-PAL addresses this by combining an LLM with an automated planner. The hybrid system succeeded in enforcing feasibility, and every plan it produced was valid, with higher utility than GPT-4 alone. However, planning time increased sharply as problem size grew, reflecting the computational cost of enforcing feasibility at the planning stage.

The reported results are based on single-day itineraries.

This reinforced a decision I had already made: feasibility can’t be discovered at the end. It has to be true from the start.

Frameworks collapse problem spaces.

If you’ve made it this far, you’ve probably noticed I like examples.

One that helped me think through this came from Einstein and Grossmann (an example I came across during my research). Before Grossmann provided the correct mathematical framework, Einstein explored a vast space of formulations. Progress was slow, not because the ideas were weak, but because the space was too large. Once the framework existed, entire classes of paths disappeared. While it still took effort to find the right one, none was spent pursuing directions that could never work.

This isn’t about physics. It’s about how the right framework collapses the space you have to reason over before anything is built.

That’s the shift I’m arguing for.

When the framework comes first, the system spends its effort moving forward rather than correcting itself.

Why I built this.

All of this thinking came after the fact.

I didn’t start with an idea. I started by building, and certain constraints kept asserting themselves. Over time, it became clear that this way of organising planning problems isn’t reflected in how consumer travel tools are designed today.

My goal was, and is, to help travel agents and agencies, my peers. They deliver enormous value, yet their monetisation is tied entirely to transactions in current systems. Much of the work required to build initial itineraries happens before there is any real commitment.

Travellers deserve better too. They deserve tools that show them the trade-offs they’re making, rather than reacting to PDFs after the fact.

At the end of the day, this system reflects how agents already think. Intake forms are decoded to collapse options. The world is vast. Exploring everything is impossible, and alignment would take forever.

Intelligence belongs in the stack. But it shouldn’t be forced to do the job of architecture.

Good games don’t rely on players avoiding broken mechanics. They design worlds where experimentation is safe. The fun comes from exploration, not from recovering from bugs.

Why this might matter beyond travel.

As I built this, I kept seeing the same pattern elsewhere.

- Disaster response planning: passable roads, shelter capacity, supply routes.

- Event production: timeslots, vendor coordination, capacity limits.

- Construction sequencing: drywall doesn’t go up before framing.

Some constraints are deterministic. Why force intelligence to learn them?

Maybe planning systems in other domains could work this way too, making expert-level work accessible to non-experts by encoding constraints, not by making AI smarter.

I don’t know if this generalises. I’ve only built it for travel. But I think it’s worth exploring.

Let’s talk.

If you’re building planning systems where feasibility matters more than creativity, where constraints are knowable but currently manual, I’d love to connect.

Ironically, while I’m arguing for collapsing problem spaces, I hope this writing expands the conversation.

If you think I’m wrong about something here, I’d especially love to hear from you. I’m still learning.

You can email me: ana at avantura dot app